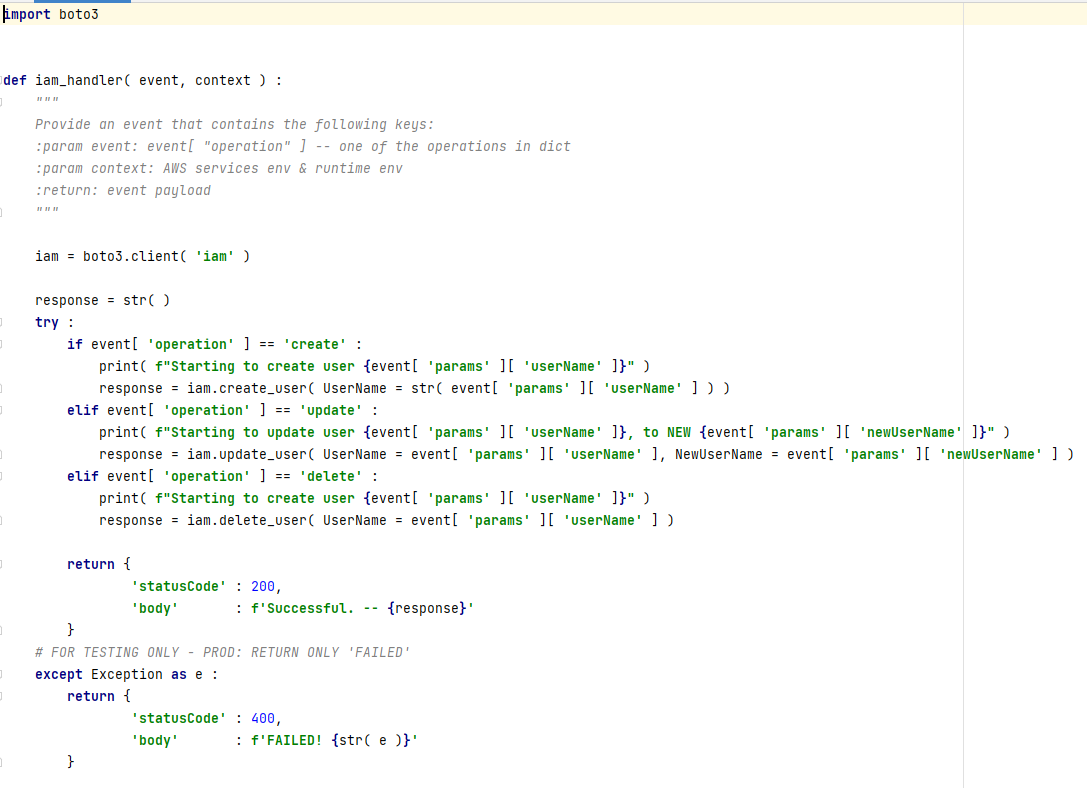

Let’s talk about another interesting case. I could, for example, create a Lambda function that performs some activity on my behalf. It will be a function created in your environment and running with your level of permissions.

For the POC, I’ll make one that simply creates another user upon receiving a specific input.

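Is this operation logged? Yes, CloudTrail records a “CreateFunction%” event, and there is also an “UpdateFunctionConfiguration%” event for updates to an existing function. The % here is a wildcard for the version suffix appended to the event name, as in AddPermission20150331v2.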
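As a rough illustration of matching those two event families, here is a minimal Python sketch. It assumes the CloudTrail records have already been exported and parsed into dictionaries that expose the `eventSource` and `eventName` fields; the function name `find_lambda_changes` and the sample records are hypothetical.

```python
LAMBDA_SOURCE = "lambda.amazonaws.com"
# Prefix match stands in for the "%" wildcard used in the text.
WATCHED_PREFIXES = ("CreateFunction", "UpdateFunctionConfiguration")


def find_lambda_changes(records):
    """Return the records that create or reconfigure a Lambda function."""
    return [
        record
        for record in records
        if record.get("eventSource") == LAMBDA_SOURCE
        and record.get("eventName", "").startswith(WATCHED_PREFIXES)
    ]


# Hypothetical sample records, reduced to the two fields the filter reads.
sample_records = [
    {"eventSource": "lambda.amazonaws.com", "eventName": "CreateFunction20150331"},
    {"eventSource": "lambda.amazonaws.com", "eventName": "UpdateFunctionConfiguration20150331v2"},
    {"eventSource": "iam.amazonaws.com", "eventName": "CreateUser"},
]

for hit in find_lambda_changes(sample_records):
    print(hit["eventName"])
```

A prefix match keeps the rule stable if the version suffix changes, at the cost of matching any future event whose name starts the same way.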

But is this a good point at which to catch the attacker? Well, it depends. If your organization makes limited use of Lambda functions, this would be abnormal activity and would stand out in your logs. Yet if your organization makes heavy use of Lambda, it is far less likely to be seen as abnormal: the malicious function looks like a typical development function, so a rule on these events would raise a false alarm for every development activity. A security team could therefore easily miss it.

Where else can an attacker be caught? Lambda will not work without a role, so the attacker must set up role assumption and trust relationships, and/or attach a corresponding policy to a user, group, or role.

Assuming a role isn’t rare in AWS; almost everything is done by assuming roles. Policy attachment isn’t rare in development processes either. Of course, attaching a policy that grants full administrative rights might (and should) trigger an alarm, but an attacker doesn’t need full administrative rights to operate.

Once again, you have events for those actions: AttachGroupPolicy, AttachUserPolicy, AttachRolePolicy.
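To make the trade-off concrete, here is a minimal Python sketch that separates the obviously alarming case (a full-administrator policy) from ordinary attachments that only merit review. It assumes each parsed record carries the attached policy in `requestParameters.policyArn`; the severity labels and the function name `classify_attach_event` are hypothetical.

```python
ATTACH_EVENTS = {"AttachGroupPolicy", "AttachUserPolicy", "AttachRolePolicy"}
# AWS managed policy that grants full administrative rights.
ADMIN_POLICY_ARN = "arn:aws:iam::aws:policy/AdministratorAccess"


def classify_attach_event(record):
    """Return 'high', 'review', or None for a parsed CloudTrail record."""
    if record.get("eventSource") != "iam.amazonaws.com":
        return None
    if record.get("eventName") not in ATTACH_EVENTS:
        return None
    params = record.get("requestParameters") or {}
    if params.get("policyArn") == ADMIN_POLICY_ARN:
        return "high"
    # Everything else is common in development and needs local context.
    return "review"


# Hypothetical record for illustration.
example = {
    "eventSource": "iam.amazonaws.com",
    "eventName": "AttachRolePolicy",
    "requestParameters": {
        "roleName": "demo-role",
        "policyArn": "arn:aws:iam::aws:policy/AdministratorAccess",
    },
}
print(classify_attach_event(example))
```

The "review" bucket is exactly where the false-positive problem lives: a narrowly scoped policy is enough for the attack and looks like any other development change.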

If you have an already built structure that never needs to change, this would be a good detection point. That is not the case in most deployments, though, because organizations, especially product-oriented ones, constantly need to develop and evolve.

Thus, you might have a constantly ongoing task of filtering out false positives. As mentioned before, the more false positives are excluded or ignored, the higher the chance of missing a true positive.

Furthermore, if I am updating an existing function rather than creating one from scratch, chances are it already has permission to assume a role. Naturally, I will try to get my hands on a function whose role suits my needs.

So, where is a better point to target malicious activity? It’s in the function itself. However, it is a bit tricky, because you need to know exactly what you are searching for and for that you MUST have a certain level of experience with the system.

In the case under discussion, I want to stop using stolen credentials, since they are a potential weak point: the more I use them, the higher the chance that someone will notice. Why not use another legitimate AWS option and allow another account to invoke our Lambda function? Sounds pretty good.

Of course, if you have only one account, then using a different account may trigger an alert – again IF you monitor the accountId field in all logs of AWS API calls. You must have a specific rule for that because the default ruleset will most probably provide just a parsing of that field, if at all.

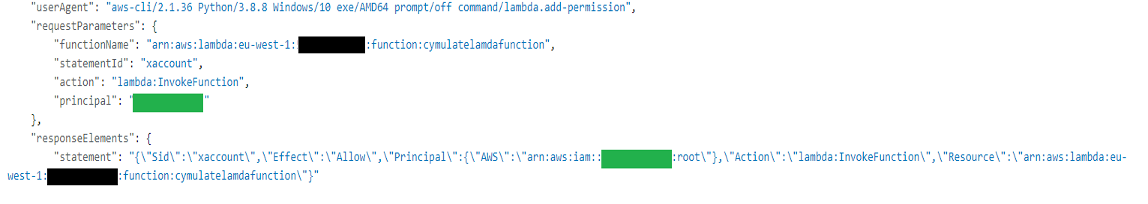

However, large organizations may use multiple accounts, so all of them need to be monitored. And if I want to let an external account, one not associated with your organization, invoke a Lambda function, the event name for that action will be AddPermission20150331v2, combined with the “xaccount” string in the statement field and “lambda.amazonaws.com” as the event source. Without knowing those specifics, the event could easily be missed.

The relevant AWS CLI command is:

aws lambda add-permission --function-name <function-ARN> --statement-id xaccount --action lambda:InvokeFunction --principal <external account ID>

This is actually the last place where the SOC operator can target the attacker before the function is executed from an external account, and it is the best place to put a fingerprint on. Other Lambda activities have a similar spot, but I cannot stress this enough: logging must be configured properly.
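A minimal Python sketch of such a fingerprint follows. It assumes the parsed record exposes the granted principal as `requestParameters.principal` (mirroring the `--principal` argument of the command) and that you maintain your own list of organizational account IDs; the account numbers and the function name `external_principal` are hypothetical.

```python
import re

# Hypothetical list of account IDs that belong to the organization.
KNOWN_ACCOUNTS = {"111111111111", "222222222222"}


def external_principal(record, known_accounts):
    """Return the external account ID granted access, or None."""
    if record.get("eventSource") != "lambda.amazonaws.com":
        return None
    if not record.get("eventName", "").startswith("AddPermission"):
        return None
    params = record.get("requestParameters") or {}
    # The principal may be a bare account ID or an ARN containing one.
    match = re.search(r"\d{12}", str(params.get("principal", "")))
    if match is None:
        return None  # e.g. a service principal such as s3.amazonaws.com
    account_id = match.group(0)
    return None if account_id in known_accounts else account_id


# Hypothetical record for illustration.
grant = {
    "eventSource": "lambda.amazonaws.com",
    "eventName": "AddPermission20150331v2",
    "requestParameters": {
        "functionName": "demo",
        "statementId": "xaccount",
        "action": "lambda:InvokeFunction",
        "principal": "999999999999",
    },
}
print(external_principal(grant, KNOWN_ACCOUNTS))
```

Comparing against a list of known accounts avoids depending on the statement ID, which is whatever string the caller chose to pass.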

The next phase of the discussed attack is the invocation of the function from an external account.

During that phase, the event name will be the same, AddPermission20150331v2, and the action will be the same as for any invocation of a Lambda function, of which there could be a lot. However, the statement will explicitly contain the external account the function was invoked from.

Here’s how it looks in CloudTrail:

The location of your organizational accountID is colored black, whereas the external/attacking accountID is in green.

You could catch this event by matching the event name AddPermission20150331v2 together with a regular expression applied to the description field: (.*Sid.*xaccount.*,.*Effect.*:.*Allow.*,.*Principal.*:{.*AWS.*:.*arn:aws:iam::.*},.*Action.*:.*lambda:InvokeFunction.*,.*Resource.*:.*arn:aws:lambda:.*:function:.*}").
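For readers who want to try the idea outside a SIEM, here is a minimal Python adaptation of that expression. It is a sketch, not the rule shipped with any product: the braces are escaped, the 12-digit account ID is captured so it can be reported, and the sample statement is a hypothetical single-line policy statement of the kind the pattern is meant to match.

```python
import re

# Adapted from the expression in the text. "xaccount" only matches the
# statement ID used in the add-permission command shown earlier.
XACCOUNT_STATEMENT = re.compile(
    r'Sid.*xaccount.*Effect.*:.*Allow'
    r'.*Principal.*:\s*\{.*AWS.*:.*arn:aws:iam::(\d{12}).*\}'
    r'.*Action.*:.*lambda:InvokeFunction'
    r'.*Resource.*:.*arn:aws:lambda:.*:function:'
)


def match_cross_account_statement(statement_text):
    """Return the account ID named in a matching statement, or None."""
    match = XACCOUNT_STATEMENT.search(statement_text)
    return match.group(1) if match else None


# Hypothetical statement text; account IDs and function name are made up.
example_statement = (
    '{"Sid":"xaccount","Effect":"Allow",'
    '"Principal":{"AWS":"arn:aws:iam::999999999999:root"},'
    '"Action":"lambda:InvokeFunction",'
    '"Resource":"arn:aws:lambda:us-east-1:111111111111:function:demo"}'
)
print(match_cross_account_statement(example_statement))
```

Because the statement ID is free text chosen by whoever runs the command, a production rule would probably key on the principal rather than on the literal `xaccount`.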

However, as mentioned previously and as you can see from the log, there is not much difference between this event and a regular Lambda function invocation. Therefore, a rule based on IOBs related to Lambda invocation needs to be correlated with the IOBs from the previous event in the chain, the one where the external account receives permission to invoke the function. Chaining those two events is my second-best choice for building good security logging for the attack discussed above.
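The chaining can be sketched in a few lines of Python. This is only an illustration under stated assumptions: records are parsed dictionaries with `eventTime` in ISO 8601 UTC form, the caller's account is in `userIdentity.accountId`, both events identify the function by the same `requestParameters.functionName` value, and the second stage accepts any later Lambda event from the granted account rather than a specific event name. The function name `correlate_grant_and_use` is hypothetical.

```python
import re


def correlate_grant_and_use(records, known_accounts):
    """Alert when an externally granted account later acts on the function."""
    granted = {}  # function identifier -> set of external account IDs
    alerts = []
    for record in sorted(records, key=lambda r: r["eventTime"]):
        if record.get("eventSource") != "lambda.amazonaws.com":
            continue
        params = record.get("requestParameters") or {}
        function = params.get("functionName")
        caller = (record.get("userIdentity") or {}).get("accountId")
        if record.get("eventName", "").startswith("AddPermission"):
            # Stage 1: remember grants made to accounts outside the org.
            match = re.search(r"\d{12}", str(params.get("principal", "")))
            if match and match.group(0) not in known_accounts:
                granted.setdefault(function, set()).add(match.group(0))
        elif caller in granted.get(function, set()):
            # Stage 2: the granted external account touches the function.
            alerts.append((function, caller, record["eventTime"]))
    return alerts
```

Keeping state per function means an invocation is only suspicious when it follows a matching grant, which is what keeps the volume of ordinary invocations from drowning the alert.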

In this specific example, I’ll create an IAM user by sending an invocation from an external account, like this:

aws lambda invoke --function-name <function-ARN> --payload $EncodedText response.json

Here the EncodedText variable holds the base64-encoded JSON for creating an IAM user named “Cymulateattacker”: '{"operation":"create","params":{"userName":"Cymulateattacker"}}'
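For completeness, here is a minimal Python sketch of how such a value could be produced. It only shows the encoding step; the helper name `encode_payload` is hypothetical, and the payload is the JSON document quoted in the text.

```python
import base64
import json

payload = {"operation": "create", "params": {"userName": "Cymulateattacker"}}


def encode_payload(document):
    """Serialize a dict to compact JSON and return it base64-encoded."""
    raw = json.dumps(document, separators=(",", ":"))
    return base64.b64encode(raw.encode("utf-8")).decode("ascii")


encoded_text = encode_payload(payload)
print(encoded_text)
```

From a defender's point of view, this encoding is also why the operation and user name do not appear as readable keywords on the command line.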

Of course, should I create a function that does something other than creating an IAM user, the result would be different too, and the keywords used in a security alert would differ as well.

How could you know when to implement what, and how? Well, here is where practical knowledge of offensive activity may help. If you know how to attack, you will know how to defend.

In conclusion, any misconfiguration in logging, parsing, or the targeting of specific fields can allow an attacker to do anything they want while the activity looks like a totally legitimate operation. The above scenarios can be alerted on with a proper implementation of logging and supporting Sigma rules.

**Both scenarios and Sigma rules are available to you in CSPM templates or as a standalone in the Advanced Scenarios section of Cymulate Extended Security Posture Management Service. You are encouraged to run the scenarios and utilize Sigma rules to see if your security solution provides you the required insight to monitor those activities effectively.**